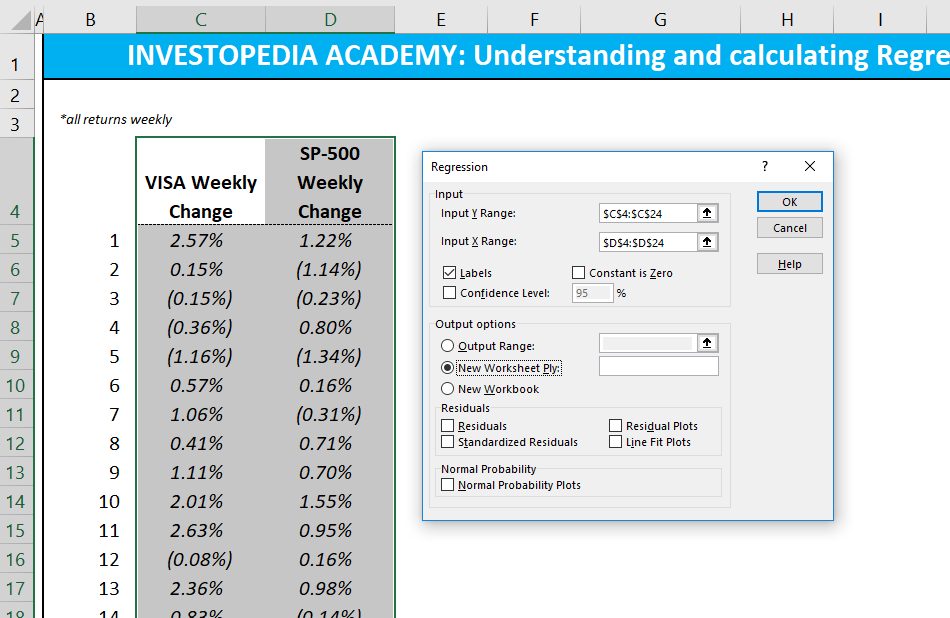

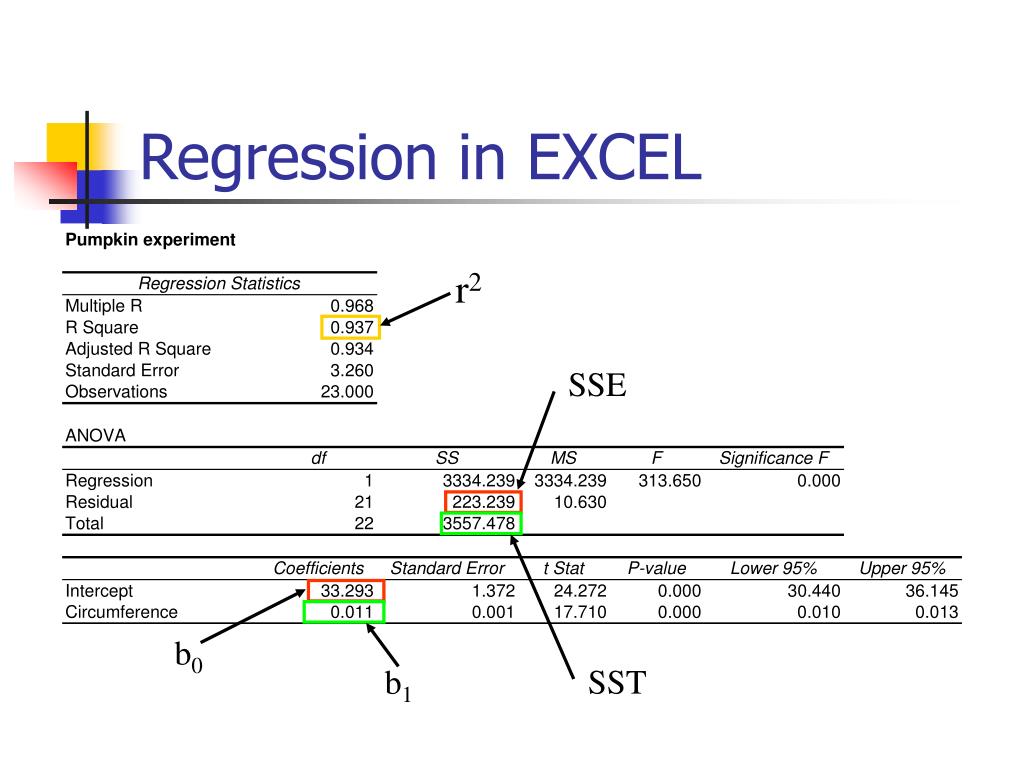

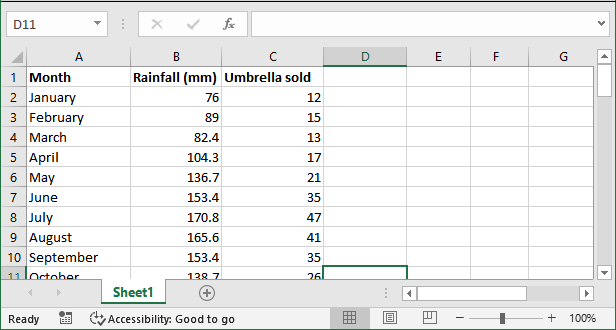

It is also common with a simple linear regression model to use the Ordinary Least Squares (OLS) method for fitting the model. In the OLS method, the model's accuracy is measured by the sum of the squared residuals across all points. A residual is the vertical distance between a point in the dataset and the fitted line.

Today, our example will illustrate the simple relationship between the number of users in a system and our Cost of Goods Sold (COGS). Through this analysis, we'll not only be able to see how strongly the two variables are correlated but also use our coefficients to predict the COGS for a given number of users.

Let's look at our data and a scatter plot to understand the relationship between the two. As they say, a picture is worth a thousand words.

The R model summary reports the fit statistics, including this line:

Residual standard error: 296100 on 10 degrees of freedom

The summary output also offers a way to quickly identify the coefficients that are statistically significant, using R's significance-code notation. Additionally, ggplot2 is a powerful visualization library that allows us to easily render the scatterplot and the regression line for a quick inspection.

If you're interested in producing similar results in Python, the best way is to use the OLS (Ordinary Least Squares) model from statsmodels. Its output is the closest to that of base R's lm function, producing a similar summary table. We'll start by importing the packages we need to run the model. After fitting, the plot overlays the fitted line on the data: `ax.plot(X, y, "*", label="Data", color="g")` draws the observations, a second `ax.plot` call draws the model's `fittedvalues` with the `"b-."` style and the label `"OLS"`, and `ax.legend(loc="best")` places the legend.

Finally! Let's use the Excel application to perform the same regression analysis. Let's look at how we can perform the same analysis using Excel but accomplish it in just a few minutes!

Setup

One of the things about Excel is that it has AMAZING depth in numerical analysis that many users have never discovered.

Start by navigating to the Data Analysis tool (part of the Analysis ToolPak add-in), located on the Data tab.

From here, we can select the Regression tool.

And as with most things in Excel, we simply populate the dialog with the right rows and columns and set a few additional options. Here are some of the most common settings that you should choose to give you a robust output.

Results

And finally, we can see the output from our analysis. Excel creates a new sheet with the results. It contains evaluation statistics such as the R-Squared and Adjusted R-Squared. It also produces an ANOVA table with values such as the Sum of Squares (SS), Mean Square (MS), and F-statistic. The Significance F value, which is the p-value of the F-statistic, tells us whether the model is statistically significant, typically when that value is less than 0.05.

Excel also provides several plots for visual inspection, such as the Residual Plot and the Line Fit Plot. For more information on interpreting a residual plot, check out this article: How to use Residual Plots for regression model validation?

Next, we might want to predict new values. There are two ways to get the coefficients. The first is to directly reference the cells output by the regression analysis tool in Excel. The second is to compute the slope and intercept yourself and use them in the regression formula. For this you will use two formulas, appropriately named =SLOPE and =INTERCEPT. Select the appropriate y and X cell ranges as the arguments to each formula.

Once you have your slope and intercept, you can plug them into the linear regression equation. Because this is a simple linear regression, we can think of this as the equation of a line, Y = MX + B, where M is the slope and B is the Y-intercept. Something we learned back in high school math is now paying dividends in data science!
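As a rough cross-check outside Excel, the slope, intercept, and Y = MX + B prediction discussed in this walkthrough can be sketched in plain Python. This is a minimal sketch, not the article's own code: the `users` and `cogs` values are made up for illustration, and the helper names `slope`, `intercept`, `predict`, and `r_squared` are hypothetical. The first two take their arguments in the same order as Excel's =SLOPE and =INTERCEPT (known y values first, then known x values).

```python
# Illustrative data only: these numbers are invented for this sketch
# and are NOT the users/COGS dataset used in the article.
users = [100, 200, 300, 400, 500]
cogs = [2000, 4000, 5000, 4000, 5000]


def slope(ys, xs):
    """Least-squares slope M, with the argument order of Excel's =SLOPE."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


def intercept(ys, xs):
    """Least-squares intercept B, with the argument order of Excel's =INTERCEPT."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    return mean_y - slope(ys, xs) * mean_x


def predict(x, m, b):
    """Plug a new x into the line Y = MX + B."""
    return m * x + b


def r_squared(ys, xs):
    """Share of the variance in y explained by the fitted line."""
    m, b = slope(ys, xs), intercept(ys, xs)
    mean_y = sum(ys) / len(ys)
    # Residuals are vertical distances between observed and fitted y values.
    ss_res = sum((y - predict(x, m, b)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1 - ss_res / ss_tot


if __name__ == "__main__":
    m = slope(cogs, users)
    b = intercept(cogs, users)
    print(f"slope={m}, intercept={b}")
    print(f"predicted COGS at 600 users: {predict(600, m, b)}")
    print(f"R-squared: {r_squared(cogs, users):.2f}")
```

With real data, the slope and intercept from this sketch should agree with the coefficients in Excel's regression output sheet, which makes it a convenient sanity check on the cell ranges you selected.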
